...

Overfitting and test evaluation of an MVA model

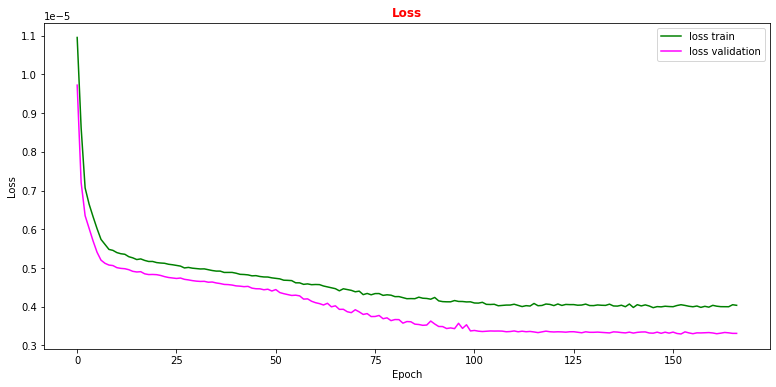

The loss function and the accuracy metrics give us a measure of the overtraining (overfitting) of the ML algorithm. Overfitting happens when an ML algorithm learns to recognize a pattern that exists only in the training (validation) sample and is absent from the testing (training) set (see the plot on the right side to understand what we would expect when overfitting happens).
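As a rough illustration of how this comparison can be made quantitative, the sketch below takes the per-epoch training and validation losses and reports the epoch with the lowest validation loss, the gap between the two losses at the last epoch, and a simple flag for the typical overfitting pattern. The helper name `overfitting_summary` and the toy loss values are hypothetical and chosen only for illustration; they are not results from this tutorial.

```python
def overfitting_summary(train_loss, val_loss):
    """Summarise per-epoch training and validation losses.

    Returns a tuple (best_epoch, final_gap, overfit_hint):
      best_epoch   -- 0-based epoch with the lowest validation loss
      final_gap    -- validation loss minus training loss at the last epoch
      overfit_hint -- True if the validation minimum is not at the last epoch
                      while the training loss kept decreasing afterwards
    """
    if len(train_loss) != len(val_loss) or not train_loss:
        raise ValueError("need two non-empty loss lists of equal length")
    best_epoch = min(range(len(val_loss)), key=val_loss.__getitem__)
    final_gap = val_loss[-1] - train_loss[-1]
    overfit_hint = (
        best_epoch < len(val_loss) - 1
        and train_loss[-1] < train_loss[best_epoch]
    )
    return best_epoch, final_gap, overfit_hint


# Made-up numbers for illustration only (not results from this tutorial).
toy_train = [0.70, 0.55, 0.45, 0.38, 0.32, 0.27]
toy_val = [0.72, 0.58, 0.50, 0.49, 0.53, 0.60]
best_epoch, final_gap, overfit_hint = overfitting_summary(toy_train, toy_val)
print(best_epoch, round(final_gap, 2), overfit_hint)
```

With a Keras training history, the same function could be called on `history.history['loss']` and `history.history['val_loss']`. The flag is only a heuristic: a small gap or a noisy validation curve still needs to be judged by eye on the plot.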

Artificial Neural Network performance

Let's make some plots to see what we obtained from training our ANN model!

```python
# Plot the loss function vs. epoch during the training phase.
# The loss function on the validation set is also computed and plotted.
plt.rcParams['figure.figsize'] = (13, 6)
plt.plot(history.history['loss'], label='loss train', color='green')
plt.plot(history.history['val_loss'], label='loss validation', color='magenta')
plt.title("Loss", fontsize=12, fontweight='bold', color='r')
plt.legend(loc="upper right")
plt.xlabel('Epoch')
plt.ylabel('Loss')
plt.show()
```

Question to student: Why does the validation loss decrease more than the training loss?

Hint: remember that we used several callback functions (callbacks) to train our ANN.