If your use case requires parallel computing (e.g. multi-node MPI) or special computing resources such as Intel Manycore or NVIDIA GPUs, you probably need access to the CNAF HPC cluster, a small cluster (about 100 TFLOPS) with special hardware available [9].

This cluster is accessible via the LSF batch system, for which you can find instructions here [10], and via the Slurm Workload Manager, which is introduced below. Please keep in mind that LSF is currently in its end-of-life (EOL) phase and will be fully replaced by Slurm in the near future.

In the following, a few details on the account request and first access are given.

If you already have an HPC account, you can skip this paragraph. Otherwise, some preliminary steps are required.

Download and fill in the access authorization form. If help is needed, please contact us at hpc-support@lists.cnaf.infn.it.

If you don't have an INFN association, please attach a scan of a personal document of yours (passport or ID card for example).

Send the form by email to sysop@cnaf.infn.it and to user-support@lists.cnaf.infn.it.

Once the account creation is completed, you will receive a confirmation at your email address, together with the credentials you need to access the cluster.

First of all, SSH into bastion.cnaf.infn.it with your own credentials.

Then access the HPC cluster by logging into ui-hpc.cr.cnaf.infn.it from bastion, using the same credentials you just used to log into bastion.
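The two hops can often be collapsed into a single command with the OpenSSH ProxyJump option (-J). The sketch below only assembles and prints that command. It assumes OpenSSH 7.3 or later on your machine and that bastion permits jump connections, which is not confirmed here; the helper name hpc_ssh_command is hypothetical.

```shell
# Hypothetical helper: print the ssh command that reaches the HPC user
# interface through bastion in one step.
# Assumption: bastion allows ProxyJump (-J). If it does not, log into
# bastion first and then ssh to ui-hpc.cr.cnaf.infn.it from there.
hpc_ssh_command() {
    local user="$1"
    printf 'ssh -J %s@bastion.cnaf.infn.it %s@ui-hpc.cr.cnaf.infn.it\n' "$user" "$user"
}
```

Calling hpc_ssh_command with your username prints the command, which can then be pasted into a terminal.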

Your home directory will be: /home/HPC/<your_username>. The home folder is shared among all the cluster nodes.

No quotas are currently enforced on the home directories, and only about 4 TB are available in the /home partition. If you need more disk space for data and checkpointing, every user can access the following directory:

/storage/gpfs_maestro/hpc/user/<your_username>/

which is on shared GPFS storage. Please do not leave huge unused files in either the home directories or the GPFS storage areas. Quotas will, however, be enforced in the near future.
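As a rough aid for spotting large unused files, the sketch below lists files above a size threshold that have not been accessed for a given number of days. The helper name find_stale_files and the thresholds are hypothetical, and the result depends on the file system recording access times, which is an assumption for both /home and the GPFS area.

```shell
# Hypothetical helper: list files under DIR that are larger than MIN_MB
# megabytes and were last accessed more than DAYS days ago.
# Assumption: access times are recorded by the underlying file system.
find_stale_files() {
    local dir="$1" min_mb="$2" days="$3"
    find "$dir" -type f -size "+${min_mb}M" -atime "+${days}" -print
}
```

For example, find_stale_files /storage/gpfs_maestro/hpc/user/<your_username>/ 1024 90 would list files larger than 1 GB that have not been read for about three months.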

For support, contact us (hpc-support@lists.cnaf.infn.it).

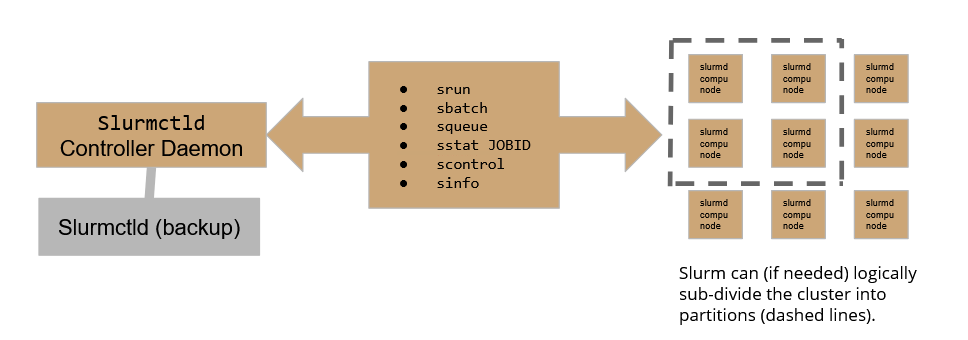

Slurm workload manager relies on the following scheme:

Where the Slurmctld daemon plays the role of the controller, allowing the user to submit and follow the execution of a job, while Slurmd daemons are the active part in the execution of jobs over the cluster. To assure high availability, A backup controller daemon has been configured to assure the continuity of service.

On our HPC cluster, there are currently 4 active partitions:

Please be aware that exceeding the MaxTime enforced will result in the job being held.

If not requested otherwise at submit time, jobs will be submitted to the _int partition. Users can freely choose which partition to use by properly configuring the batch submit file (see below).

You can check the cluster status using the sinfo -N command, which prints a summary table on the standard output.

The table shows 4 columns: NODELIST, NODES, PARTITION, and STATE.

For instance:

    -bash-4.2$ sinfo -N
    NODELIST       NODES  PARTITION       STATE
    hpc-200-06-05  1      slurmHPC_int*   idle
    hpc-200-06-05  1      slurmHPC_short  idle
    hpc-200-06-05  1      slurmHPC_inf    idle
    hpc-200-06-05  1      slurm_GPU       idle
    hpc-200-06-06  1      slurmHPC_short  idle
    hpc-200-06-06  1      slurmHPC_int*   idle
    hpc-200-06-06  1      slurmHPC_inf    idle
    [...]
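Since the same node appears once per partition, it can be handy to filter this table. The following is a minimal sketch, assuming the four-column layout shown above; the helper name idle_nodes is hypothetical. It reads sinfo -N output from standard input and prints the nodes that are idle in one partition.

```shell
# Hypothetical helper: read `sinfo -N` output on standard input and print
# the names of the nodes that are idle in the given partition.
idle_nodes() {
    local partition="$1"
    awk -v part="$partition" '
        NR == 1 { next }                 # skip the header row
        {
            name = $3
            sub(/\*$/, "", name)         # the default partition carries a trailing *
            if (name == part && $4 == "idle") print $1
        }'
}
```

A typical call would be sinfo -N | idle_nodes slurmHPC_short.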

To run a batch job, the user should write a batch submit file. Slurm accepts batch files that respect the following basic syntax:

    #!/bin/bash
    #
    #SBATCH <OPTIONS>
    (...)
    srun <INSTRUCTION>

The #SBATCH directives tell Slurm how to configure the environment for the job in question, while srun is used to actually execute specific commands. It is worth getting acquainted with some of the most useful and commonly used options one can leverage in a batch submit file.
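To make the syntax concrete, here is a minimal sketch of a generator that prints a submit file following this layout. The helper name make_submit_file and the choice of which options to emit are hypothetical; the partition name in the usage note is taken from the sinfo output above.

```shell
# Hypothetical helper: print a minimal batch submit file to standard output.
# Arguments: job name, partition, then the command to run through srun.
make_submit_file() {
    local job_name="$1" partition="$2"
    shift 2
    cat <<EOF
#!/bin/bash
#
#SBATCH --job-name=${job_name}
#SBATCH --partition=${partition}
#SBATCH --output=${job_name}.txt
srun $*
EOF
}
```

For instance, make_submit_file hello slurmHPC_short hostname -s > hello.sh writes a file that can then be passed to sbatch.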

In the following, the structure of batch jobs is discussed alongside a few basic examples.

srun

In order to run instructions, a job has to be scheduled on Slurm: the basic srun command allows the user to execute very simple commands, such as one-liners, on compute nodes.

The srun command can also be enriched with several useful options:

srun -N5 /bin/hostname will run the hostname command on 5 nodes.

If the user needs to specify the run conditions in detail or to run more complex jobs, the user must write a batch submit file.

A Slurm batch job can be configured quite extensively, so shedding some light on the most common sbatch options may help us configure jobs properly.

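#SBATCH --partition=<NAME>: the partition the job is submitted to.

#SBATCH --job-name=<NAME>: the name of the job, as shown in the queue.

#SBATCH --output=<FILE>: the file to which the job's standard output is written.

#SBATCH --nodelist=<NODES>: the nodes the job should run on. For example, if the available nodes are node[1-5] and we specify --nodelist=node[1-2], our job will only use these two nodes.

#SBATCH --nodes=<INT>: the number of nodes to allocate.

#SBATCH --ntasks=<INT>: the total number of tasks to run.

#SBATCH --ntasks-per-node=<INT>: the number of tasks to run on each node.

#SBATCH --time=<TIME>: the time limit for the job (for example, 5:00 means five minutes).

#SBATCH --mem=<INT>: the memory required per node, in megabytes by default.

In the following we present some advanced SBATCH options. These allow the user to set up constraints and use specific computing hardware, such as GPUs.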

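#SBATCH --constraint=<...>: restricts the job to nodes with a given feature, for example --constraint=IB (force the use of InfiniBand nodes) or --constraint=<CPUTYPE> (force the use of CPUTYPE CPUs).

#SBATCH --gres=<GRES>:<#GRES>: requests generic resources, for example --gres=gpu:<INT>, where <INT> is the number of GPUs we want to use.

#SBATCH --mem-per-cpu=<INT>: the memory required per allocated CPU, in megabytes by default.

In the following, a few usage examples are given in order to practice with these concepts.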
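As an illustration of the --gres option, the sketch below prints a submit file that requests a number of GPUs on a single node. It is not a tested recipe for this cluster: the helper name make_gpu_submit_file is hypothetical, and it assumes that GPU nodes are reached through the slurm_GPU partition seen in the sinfo output and that nvidia-smi is installed on them.

```shell
# Hypothetical helper: print a submit file that requests N GPUs on one node.
# Assumptions: GPU nodes belong to the slurm_GPU partition and provide the
# nvidia-smi tool, which simply reports the GPUs visible to the job.
make_gpu_submit_file() {
    local n_gpus="${1:-1}"
    cat <<EOF
#!/bin/bash
#
#SBATCH --job-name=gpu_check
#SBATCH --output=gpu_check.txt
#SBATCH --partition=slurm_GPU
#SBATCH --nodes=1
#SBATCH --gres=gpu:${n_gpus}
#SBATCH --time=5:00
srun nvidia-smi
EOF
}
```

Running make_gpu_submit_file 2 > gpu_check.sh produces a file that asks for two GPUs; with no argument it asks for one.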

A user can retrieve a concise overview of the Slurm job queue status with the squeue command.

Among the information printed by squeue, the user can find the job ID as well as the running time and status.
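When many jobs are queued, a per-state summary can be easier to read than the full listing. The following is a minimal sketch, assuming the default squeue layout in which the fifth column (ST) holds the job state and job names contain no spaces; the helper name count_jobs_by_state is hypothetical.

```shell
# Hypothetical helper: read default-format squeue output on standard input
# and print how many jobs are in each state (column ST, e.g. R for running
# and PD for pending).
count_jobs_by_state() {
    awk 'NR > 1 && NF >= 5 { count[$5]++ }
         END { for (s in count) print s, count[s] }' | sort
}
```

It can be combined with the user filter of squeue, for example squeue -u "$USER" | count_jobs_by_state.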

If you are running a job, you can check it in detail with the command

sstat -j <jobid>

where jobid is an id given to you by Slurm once you submit the job.

Below are some examples of submit files to help the user get comfortable with Slurm.

See them as a controlled playground to test some of the features of Slurm.

    #!/bin/bash
    #
    #SBATCH --job-name=tasks1
    #SBATCH --output=tasks1.txt
    #SBATCH --nodelist=hpc-200-06-[17-18]
    #SBATCH --ntasks-per-node=8
    #SBATCH --time=5:00
    #SBATCH --mem-per-cpu=100
    srun hostname -s

To execute this script, the command to be issued is

sbatch <executable.sh>

namely

    -bash-4.2$ sbatch test_slurm.sh
    Submitted batch job 23
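In scripts it is often convenient to keep only the job id from this message. A minimal sketch, assuming the confirmation has exactly the form shown above; the helper name extract_job_id is hypothetical.

```shell
# Hypothetical helper: read the sbatch confirmation message on standard
# input and print only the numeric job id.
extract_job_id() {
    sed -n 's/^Submitted batch job \([0-9][0-9]*\).*$/\1/p'
}
```

For example, jobid="$(sbatch test_slurm.sh | extract_job_id)" stores the id for later use with sstat -j "$jobid". The --parsable option of sbatch is an alternative that makes sbatch print the job id without the surrounding text.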

Then, to see information about a single job use:

-bash-4.2$ sstat -j 23

The job output, written to tasks1.txt, should look something like this (hostnames and formatting may change):

    -bash-4.2$ cat tasks1.txt
    hpc-200-06-17
    hpc-200-06-17
    hpc-200-06-17
    hpc-200-06-17
    hpc-200-06-17
    [...]

As we can see, the --ntasks-per-node=8 option was interpreted by Slurm as “reserve 8 CPUs on node 17 and 8 on node 18 and execute the job on those CPUs”.

It is useful to see how the output would look if --ntasks=8 were used instead of --ntasks-per-node=8. In that case, the output should look like:

    hpc-200-06-17
    hpc-200-06-18
    hpc-200-06-17
    hpc-200-06-18
    hpc-200-06-17
    hpc-200-06-18
    hpc-200-06-17
    hpc-200-06-18

As we can see, the execution involved only 8 CPUs, and the workload was spread across the nodes to minimize the burden on each of them.
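To compare the two task layouts described above without counting lines by hand, the job output file can be summarized per host. A minimal sketch, assuming one hostname per line as produced by srun hostname -s; the helper name tasks_per_host is hypothetical.

```shell
# Hypothetical helper: count how many lines (one per task) each host
# wrote to a job output file such as tasks1.txt.
tasks_per_host() {
    awk 'NF { count[$1]++ }
         END { for (h in count) print h, count[h] }' "$1" | sort
}
```

Calling tasks_per_host tasks1.txt prints each hostname followed by the number of tasks that ran on it.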

To submit an MPI job on the cluster, the user must secure-copy the .mpi executable to the cluster.

In the following example, we prepared a C program that calculates the value of pi and compiled it with:

module load openmpi

mpicc example.c -o picalc.mpi

    #!/bin/bash
    #
    #SBATCH --job-name=test_mpi_picalc
    #SBATCH --output=res_picalc.txt
    #SBATCH --nodelist=... #or use --nodes=...
    #SBATCH --ntasks=8
    #SBATCH --time=5:00
    #SBATCH --mem-per-cpu=1000
    srun picalc.mpi

If the .mpi file is not available on the desired compute nodes, which will be the most frequent scenario if you save your files in a custom path, the computation is going to fail. That happens because Slurm does not transfer files to the compute nodes on its own.

One option is to secure-copy the executable to every node in the desired nodelist, which is feasible only if 1-2 nodes are involved. Otherwise, Slurm offers an srun option which may help the user.

In this case, the srun command has to be built as follows:

    srun --bcast=$HOME/picalc.mpi picalc.mpi

Here the --bcast option copies the executable to every node, with the destination path given as its argument. In this case, we decided to copy the executable into the home folder, keeping the original name as-is.

The submission of a Python job over the cluster follows the syntactic rules of the previous use cases. The only significant difference is that we have to specify the interpreter when we call the srun command.

In the following, we show how to execute a python job over 16 CPUs of a selected cluster node.

    #!/bin/bash
    #
    #SBATCH --job-name=prova_python_sub
    #SBATCH --output=prova_python_sub.txt
    #SBATCH --nodelist=hpc-200-06-05
    #SBATCH --ntasks=16
    #SBATCH --time=5:00
    #SBATCH --mem-per-cpu=100
    srun python3 trial.py

Again, if secure-copying the executable to every node involved in the computation is impractical, the user should add the --bcast option to the srun command.

With Slurm, we can also submit Python jobs that leverage a virtual environment. This comes in handy if the job needs to use packages that are not included in the standard Python setup on the cluster.

In order to do so, the user has to:

These 4 steps are required only once.
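As a rough sketch of what such a one-time setup commonly looks like with Python's standard venv module, consider the helper below. This is an assumption and may differ from the procedure intended for this cluster: the helper name setup_venv is hypothetical, and it presumes that python3 with the venv module is available and that pip can reach a package index from the node where it runs.

```shell
# Hypothetical helper: create a virtual environment in VENV_DIR and install
# the listed packages into it. Intended to be run once.
# Assumptions: python3 provides the venv module, and pip can download packages.
setup_venv() {
    local venv_dir="$1"
    shift
    python3 -m venv "$venv_dir" || return 1
    # Nothing to install if no packages were listed.
    [ "$#" -gt 0 ] || return 0
    # Activate inside a subshell so the caller's environment stays untouched.
    (
        . "$venv_dir/bin/activate" &&
        python -m pip install "$@"
    )
}
```

For example, setup_venv ~/myenv numpy would create the environment in ~/myenv and install numpy into it; both names are placeholders.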

Henceforth, the user can add these lines to the submission script and use the desired virtual environment:

    source PATH_TO_VENV/bin/activate
    srun python myscript.py
    deactivate

Complete overview of hardware specs per node: http://wiki.infn.it/strutture/cnaf/clusterhpc/home.

Ask for support via email: hpc-support@lists.cnaf.infn.it.