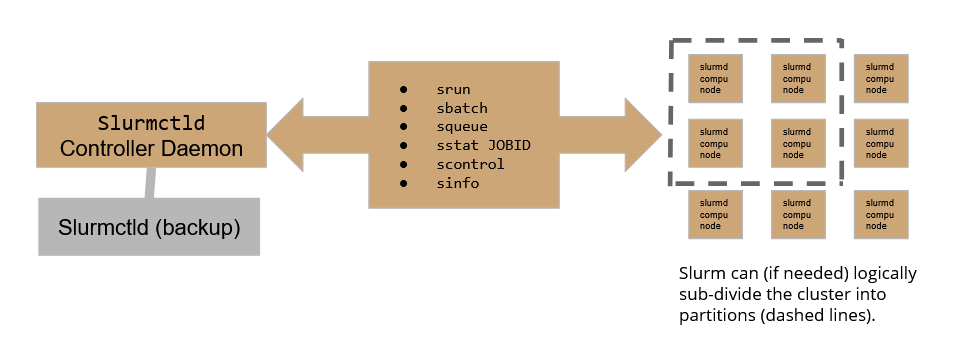

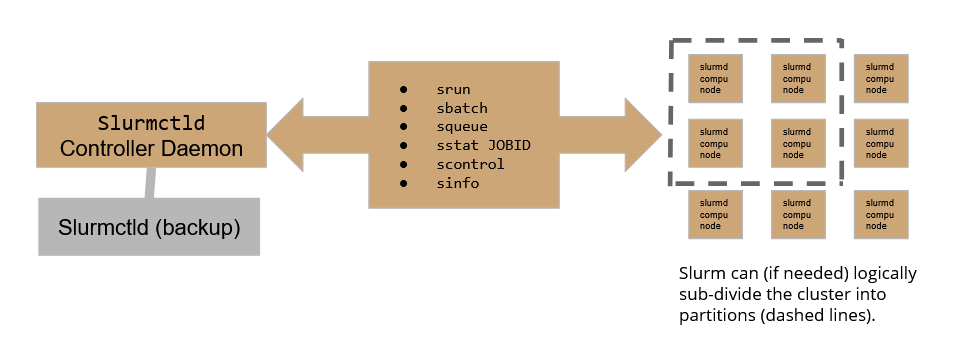

The Slurm workload manager relies on the following architecture:

Here, the Slurmctld daemon acts as the controller, allowing users to submit jobs and monitor their execution, while the Slurmd daemons carry out the actual execution of jobs on the cluster nodes. To ensure high availability, a backup controller daemon has been configured to guarantee continuity of service.

On our HPC cluster, there are currently 4 active partitions:

Please be aware that exceeding the enforced MaxTime will result in the job being held.
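The time limit of each partition can be queried with `sinfo -o "%P %l"` (partition name and time limit) or `scontrol show partition <name>`. To check a requested `--time` value against such a limit before submitting, a small helper along these lines can be used. It is a sketch, not a cluster-provided tool: the function names `to_seconds` and `fits_maxtime` are hypothetical, and it assumes the standard Slurm time formats listed in the comments.

```shell
#!/bin/bash
# Hypothetical helpers: convert a Slurm time string to seconds and check a
# requested --time value against a partition's MaxTime.
# Accepted formats: minutes, minutes:seconds, hours:minutes:seconds,
# days-hours, days-hours:minutes, days-hours:minutes:seconds.

to_seconds() {
  local t=$1 days=0 h=0 m=0 s=0 a b c
  if [[ $t == *-* ]]; then
    days=${t%%-*}                       # part before the dash
    IFS=: read -r h m s <<< "${t#*-}"   # hours[:minutes[:seconds]]
    h=${h:-0}; m=${m:-0}; s=${s:-0}
  else
    IFS=: read -r a b c <<< "$t"
    if [[ -z $b ]]; then m=$a           # minutes
    elif [[ -z $c ]]; then m=$a; s=$b   # minutes:seconds
    else h=$a; m=$b; s=$c               # hours:minutes:seconds
    fi
  fi
  # 10# forces base 10 so values such as "08" are not read as octal
  echo $(( ((10#$days * 24 + 10#$h) * 60 + 10#$m) * 60 + 10#$s ))
}

# Usage: fits_maxtime REQUESTED MAXTIME  (exit status 0 if REQUESTED <= MAXTIME)
fits_maxtime() {
  # sinfo prints "infinite" and scontrol "UNLIMITED" when there is no limit
  if [[ $2 == infinite || $2 == UNLIMITED ]]; then
    return 0
  fi
  (( $(to_seconds "$1") <= $(to_seconds "$2") ))
}
```

For example, `fits_maxtime 2-00:00:00 1-00:00:00 || echo "requested time exceeds MaxTime"` prints the warning, since two days exceed a one-day limit (both values here are illustrative, not actual partition limits).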

Unless a different partition is requested at submit time, jobs are submitted to the slurmHPC_int partition. Users are free to choose which partition to use by configuring the batch submission file accordingly (see below).
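As an illustration, the snippet below writes a minimal batch file, `job.sh`, whose `#SBATCH --partition` directive selects `slurmHPC_short` instead of the default partition. The file name, job name, time limit and resource requests are placeholders chosen for this sketch, not values taken from the cluster configuration; the requested time must stay within the MaxTime of the chosen partition.

```shell
#!/bin/bash
# Write a minimal batch file targeting slurmHPC_short instead of the default
# partition. Job name, time limit and resources are illustrative placeholders.
cat > job.sh <<'EOF'
#!/bin/bash
#SBATCH --job-name=example
#SBATCH --partition=slurmHPC_short
#SBATCH --nodes=1
#SBATCH --ntasks=1
#SBATCH --time=00:10:00

echo "Running on $(hostname) in partition ${SLURM_JOB_PARTITION}"
EOF

# On a login node, submit it with:
#   sbatch job.sh
```

The same choice can also be made at submit time, e.g. `sbatch --partition=slurmHPC_inf job.sh`; options given on the `sbatch` command line take precedence over the `#SBATCH` directives in the file.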

You can check the cluster status with the `sinfo -N` command, which prints a node-oriented summary table to standard output.

The table has four columns: NODELIST, NODES, PARTITION and STATE. An asterisk after a partition name (e.g. slurmHPC_int*) marks the default partition.

For instance:

```
-bash-4.2$ sinfo -N
NODELIST       NODES PARTITION      STATE
hpc-200-06-05      1 slurmHPC_int*  idle
hpc-200-06-05      1 slurmHPC_short idle
hpc-200-06-05      1 slurmHPC_inf   idle
hpc-200-06-05      1 slurm_GPU      idle
hpc-200-06-06      1 slurmHPC_short idle
hpc-200-06-06      1 slurmHPC_int*  idle
hpc-200-06-06      1 slurmHPC_inf   idle
[...]
```
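When the node list is long, the `sinfo -N` output can be summarised with standard tools. The sketch below (the function name `idle_per_partition` is hypothetical) counts, for each partition, the rows whose STATE is exactly `idle`, stripping the `*` that marks the default partition.

```shell
#!/bin/bash
# Hypothetical helper: read `sinfo -N` output on stdin and print
# "<partition> <number of idle nodes>" for each partition, sorted by name.
idle_per_partition() {
  awk 'NR > 1 && $4 == "idle" {   # skip the header line
         sub(/\*$/, "", $3)        # drop the default-partition marker
         n[$3]++
       }
       END { for (p in n) print p, n[p] }' | sort
}
```

Typical use is `sinfo -N | idle_per_partition`. States carrying a suffix, such as `idle*` for a node that is not responding, are deliberately not counted. Slurm can also filter directly: `sinfo -N -p slurmHPC_short -t idle` lists only the idle nodes of that partition.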